Agentic vs. Generative AI: A Decision Framework for Enterprise Leaders

The AI vendor ecosystem has a strong incentive to make every use case sound like it needs the most sophisticated architecture available. In practice, a large share of enterprise AI value comes from well-implemented generative AI on a clean RAG architecture, with no agents required. The converse is also true: some problems are structurally unsuited to generation and need agents from the start.

This framework is how EFS AI approaches the question in client engagements. It's deliberately opinionated — our goal is to match architecture to problem, not to sell complexity.

Defining the Categories

Generative AI

Generative AI systems take an input and produce an output. The system takes no autonomous actions: any call to an external API is made explicitly by the surrounding application code, not initiated by the model. It answers, summarizes, classifies, or generates, and then stops.

Agentic AI

Agentic AI systems plan, execute, and adapt across multiple steps. An agent receives a goal and autonomously determines the sequence of actions required to achieve it — calling APIs, querying databases, running calculations, updating records, and invoking other AI models as tools.
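The difference between the two definitions above is easiest to see as control flow. The following is a minimal sketch, not a production pattern: `fake_model`, `toy_planner`, and the two tools are hypothetical stand-ins for a real model, planner, and backend systems.

```python
from typing import Callable, Optional


def generative(prompt: str, model: Callable[[str], str]) -> str:
    """Generative shape: one input, one output, then stop."""
    return model(prompt)


def agentic(goal: str, plan_next, tools: dict, max_steps: int = 5) -> list:
    """Agentic shape: pick the next action from results so far, run it, repeat."""
    history = []
    for _ in range(max_steps):  # hard cap so a confused planner cannot loop forever
        action = plan_next(goal, history)
        if action is None:  # planner signals that the goal is met
            break
        name, arg = action
        history.append((name, arg, tools[name](arg)))
    return history


# Hypothetical stand-ins for illustration only.
def fake_model(prompt: str) -> str:
    return f"Summary of {len(prompt.split())} words"


def toy_planner(goal: str, history: list) -> Optional[tuple]:
    if not history:
        return ("lookup_order", goal)
    last_tool, _, last_result = history[-1]
    if last_tool == "lookup_order" and last_result == "delayed":
        return ("notify_customer", goal)  # next step depends on the lookup result
    return None


TOOLS = {
    "lookup_order": lambda order_id: "delayed" if order_id == "A-17" else "shipped",
    "notify_customer": lambda order_id: f"notified customer about {order_id}",
}

print(generative("order A-17 is late", fake_model))
print(agentic("A-17", toy_planner, TOOLS))
```

The generative function has no loop and no side effects. The agentic function decides its second step only after seeing the result of the first, which is the property the rest of this framework keys on.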

Hybrid

Many enterprise AI implementations are hybrid: generative AI provides language understanding and output quality, while agentic orchestration handles sequencing, tool selection, and action execution.

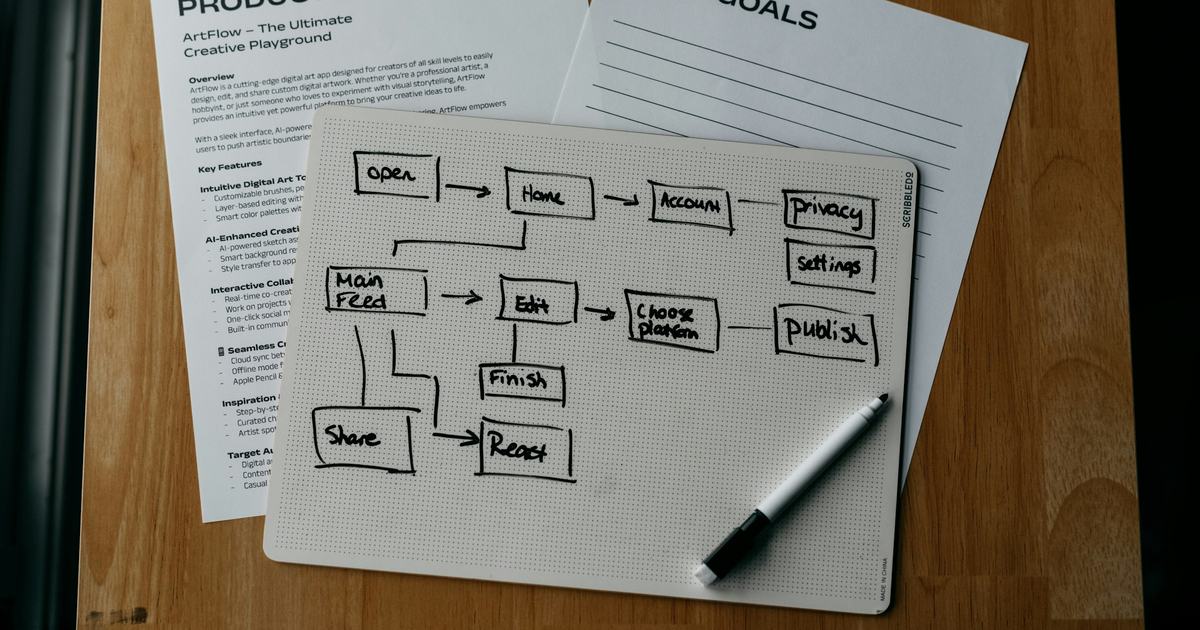

Decision Matrix

| Question | Points to Generative | Points to Agentic |
|---|---|---|
| Does the task require taking action in an external system? | No: output only | Yes: write to database, call API, update record |
| Is the task sequence fixed and predictable? | Yes: deterministic flow | No: depends on intermediate results |
| Can a human review every AI output before it has effect? | Yes: human in the loop is practical | No: volume or latency makes it impractical |
| Does the task map a single input to a single output? | Yes: one-shot response | No: multi-hop, iterative, or conditional |
| Does the task require synthesizing information from multiple live systems? | No: static corpus retrieval is sufficient | Yes: real-time data from multiple APIs |
| Is the cost of an autonomous error recoverable? | Yes: low cost, reversible | Depends: requires confidence gating if errors are costly |
| Does the task require memory or state across multiple interactions? | No: stateless generation is sufficient | Yes: long-running workflows, multi-session context |
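For teams that want to run many candidate use cases through the matrix consistently, the questions can be captured as a simple checklist. The sketch below is illustrative only: the field names, the `AGENTIC_MAJORITY` threshold, and the rule that mixed signals mean "hybrid" are assumptions made for this example, not a scoring method stated in the framework. The three example inputs are one possible reading of the case studies in the next section.

```python
from dataclasses import dataclass, fields


@dataclass
class UseCase:
    """One flag per matrix row; True means the row points to agentic."""
    acts_on_external_system: bool = False
    next_step_depends_on_results: bool = False
    human_review_impractical: bool = False
    multi_step_or_conditional: bool = False
    needs_live_multi_system_data: bool = False
    needs_state_across_interactions: bool = False
    # This row does not vote; it only decides whether gating is needed.
    autonomous_errors_costly: bool = False


AGENTIC_MAJORITY = 4  # assumed threshold, chosen only for illustration


def assess(uc: UseCase):
    """Return (leaning, needs_confidence_gating, agentic_signals)."""
    signals = [
        f.name
        for f in fields(uc)
        if f.name != "autonomous_errors_costly" and getattr(uc, f.name)
    ]
    if not signals:
        leaning = "generative"
    elif len(signals) >= AGENTIC_MAJORITY:
        leaning = "agentic"
    else:
        leaning = "hybrid"
    needs_gating = leaning != "generative" and uc.autonomous_errors_costly
    return leaning, needs_gating, signals


clinical_docs = UseCase()  # every row points to generative
edi_correction = UseCase(
    acts_on_external_system=True,
    next_step_depends_on_results=True,
    human_review_impractical=True,
    multi_step_or_conditional=True,
    needs_live_multi_system_data=True,
    autonomous_errors_costly=True,
)
hr_assistant = UseCase(needs_live_multi_system_data=True)

for name, uc in [("clinical", clinical_docs), ("edi", edi_correction), ("hr", hr_assistant)]:
    print(name, assess(uc)[:2])
```

A checklist like this is a conversation aid, not a verdict. The value is in forcing an explicit answer to each row before anyone commits to an architecture.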

EFS Case Studies Mapped to the Framework

Generative AI: Clinical Documentation with HIPAA Controls

A mid-market healthcare provider needed to reduce manual effort for patient intake form processing and clinical note classification. The task was well-defined, the input corpus was relatively stable, and clinical staff reviewed AI outputs before they entered the electronic health record. This was a clean generative AI use case on AWS Bedrock with a RAG architecture. The system achieved high accuracy in automated document classification and a 78% reduction in intake processing time. Agents would have added cost and latency with no benefit.

Agentic AI: EDI Error Detection and Correction

A national manufacturer was processing thousands of EDI transactions daily. The task required reading the incoming EDI file, classifying the error type, querying an internal product catalog, applying the correction, updating the order management system, and deciding whether to act autonomously or escalate based on confidence. This is a structurally agentic problem: it requires action across multiple systems and conditional logic based on intermediate results. The confidence-gated agent (see "How Confidence Gating Makes AI Safe for Enterprise Decisions") delivered a 94% reduction in EDI processing errors.
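The act-or-escalate decision described above can be sketched in a few lines. This is a minimal illustration of confidence gating in general, not the deployed EFS agent: the `Correction` fields, the `AUTO_APPLY_THRESHOLD` value, and the `apply` and `escalate` callbacks are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Correction:
    transaction_id: str
    field_name: str
    proposed_value: str
    confidence: float  # 0.0 to 1.0, as reported by the classifier


AUTO_APPLY_THRESHOLD = 0.90  # assumed value; a real threshold is tuned per error type


def gate(
    correction: Correction,
    apply: Callable[[Correction], None],
    escalate: Callable[[Correction], None],
    threshold: float = AUTO_APPLY_THRESHOLD,
) -> str:
    """Apply the correction autonomously only when confidence clears the threshold."""
    if correction.confidence >= threshold:
        apply(correction)
        return "applied"
    escalate(correction)  # route to a human review queue
    return "escalated"


# Hypothetical stand-ins for the order management system and the review queue.
applied, review_queue = [], []

sure = Correction("TX-1001", "sku", "AB-200", confidence=0.97)
unsure = Correction("TX-1002", "sku", "AB-201", confidence=0.62)

print(gate(sure, applied.append, review_queue.append))
print(gate(unsure, applied.append, review_queue.append))
```

The gate sits between the agent's proposal and the write to the backend system, so a low-confidence correction never reaches the order management system without a human seeing it first.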

Hybrid: GenAI HR Assistant

An enterprise HR team needed an AI assistant for benefits, PTO policy, and onboarding questions. Most questions were answerable from a RAG corpus. But some required looking up the employee's specific plan and checking their remaining PTO balance in the HRIS. The generative layer handled document Q&A via a RAG architecture; the agentic layer handled authenticated HRIS queries. This hybrid architecture kept costs low while enabling personalized responses.
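The split between the two layers can be sketched as a router in front of a shared generation step. Everything here is an assumption made for illustration: the keyword check in `needs_hris` stands in for whatever intent classifier a real system would use, and `retrieve`, `hris_lookup`, and `generate` are hypothetical stubs for the RAG retriever, the authenticated HRIS query, and the model call.

```python
import re

PERSONAL_WORDS = {"i", "my", "me"}  # crude stand-in for a real intent classifier


def needs_hris(question: str) -> bool:
    """Guess whether the question is about the asker's own records."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    return bool(words & PERSONAL_WORDS)


def answer(question, employee_id, retrieve, hris_lookup, generate) -> str:
    context = list(retrieve(question))  # generative layer: policy documents
    if needs_hris(question):
        # Agentic layer: a live, per-employee lookup, only when needed.
        context.append(hris_lookup(employee_id))
    return generate(question, context)


# Hypothetical stubs so the sketch runs end to end.
hris_calls = []


def retrieve(question):
    return ["PTO policy: 20 days per year."]


def hris_lookup(employee_id):
    hris_calls.append(employee_id)
    return f"Employee {employee_id} has 7 PTO days remaining."


def generate(question, context):
    return " ".join(context)


print(answer("What is the PTO policy?", "E42", retrieve, hris_lookup, generate))
print(answer("How many PTO days do I have left?", "E42", retrieve, hris_lookup, generate))
```

Policy questions never touch the HRIS, which is where the cost saving comes from: the more expensive authenticated lookup runs only for the subset of questions that need it.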

Agentic AI: Voice Agent for Customer Service

An enterprise client needed to automate common inquiry types — order status, appointment scheduling, basic account changes — representing 60%+ of inbound call volume. The latency and sequence requirements of a voice interaction, combined with the need to take live actions in backend systems, made this an agentic architecture from the start.

The Most Common Mismatches

Over-engineering with agents: Teams reach for agents because agents sound more capable, then spend months debugging non-deterministic planning behavior for a use case that a RAG pipeline with good re-ranking would have solved in weeks. If your task is "answer questions from our documentation," you do not need an agent.

Under-engineering with generation: Teams try to solve multi-step operational workflows with prompt engineering on a generative model — chaining together prompts with no formal state management or error handling. This works in demos. It breaks at production volume.
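To make "formal state management and error handling" concrete, here is a minimal sketch of what a bare prompt chain lacks. The `WorkflowState` record, the retry count, and the step functions are hypothetical. A production system would typically persist the state and use a workflow engine instead of an in-process loop.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class WorkflowState:
    data: dict
    completed: list = field(default_factory=list)
    failed_step: Optional[str] = None
    error: Optional[str] = None


def run_workflow(steps, state: WorkflowState, max_attempts: int = 2) -> WorkflowState:
    """Run named steps in order, retrying each and recording where a run stops."""
    for name, step in steps:
        if name in state.completed:
            continue  # already done in an earlier run, so a resume skips it
        for attempt in range(1, max_attempts + 1):
            try:
                state.data = step(state.data)  # each step returns a new dict
                state.completed.append(name)
                break
            except Exception as exc:
                if attempt == max_attempts:
                    state.failed_step = name
                    state.error = str(exc)
                    return state  # stop here; later steps never run
    return state


# Hypothetical steps: one fails once and then succeeds, one always fails.
attempts = {"classify": 0}


def classify(data):
    attempts["classify"] += 1
    if attempts["classify"] == 1:
        raise RuntimeError("timeout")
    return {**data, "classified": True}


def lookup(data):
    raise ValueError("catalog unavailable")


def update(data):
    return {**data, "updated": True}


result = run_workflow(
    [("classify", classify), ("lookup", lookup), ("update", update)],
    WorkflowState(data={"id": 1}),
)
print(result.completed, result.failed_step, result.error)
```

With the state recorded explicitly, a failed run can be inspected and resumed from the step that failed. A chain of prompts has no equivalent record, which is why it tends to fail silently at volume.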

A Practical Starting Point

- List your candidate use cases — don't start with architecture, start with problems worth solving

- Run each through the decision matrix

- Scope a generative pilot first for knowledge-base use cases — faster to deliver, faster to prove value, lower risk

- Scope agentic architectures only for use cases where the decision matrix genuinely points there

- Plan for hybrid from the start — even if you launch with generative-only, design the architecture to accommodate agentic extensions

Disclaimer: AI performance varies by data quality and use case. Metrics cited are from production deployment validation. Actual results will vary based on data quality, workflow configuration, and organizational context. EFS designs infrastructure and implements controls aligned with HIPAA and related frameworks. Ultimate compliance responsibility rests with the client organization. We do not provide legal advice — consult qualified legal counsel for regulatory interpretation. AWS and other third-party platforms referenced have their own compliance certifications and shared responsibility models.

Frequently Asked Questions

What is the difference between agentic AI and generative AI?

Generative AI takes an input and produces an output — it answers, summarizes, classifies, or generates content, then stops. Agentic AI plans, executes, and adapts across multiple steps — it autonomously determines the sequence of actions required to achieve a goal, calling APIs, querying databases, and updating records along the way.

Can I use both generative and agentic AI in the same system?

Yes — and most production enterprise AI implementations are hybrid. The generative layer provides language understanding and output quality, while the agentic orchestration handles sequencing, tool selection, and action execution. EFS designs for hybrid from the start, even when launching with generative-only capabilities.

How do I know if my use case needs an agent?

If the task requires taking action in an external system, depends on intermediate results to determine the next step, or synthesizes information from multiple live systems, it points to an agentic architecture. If it maps a single input to a single output and a human reviews the output before it takes effect, generative AI is likely sufficient and faster to deploy.

Related

How Confidence Gating Makes AI Safe for Enterprise Decisions

How confidence gating prevents autonomous AI from making bad decisions in production — with EDI automation and HIPAA workflow examples from EFS.

RAG Architecture Patterns on AWS Bedrock: Naive, Advanced, and Agentic

Compare naive, advanced, and agentic RAG on AWS Bedrock — embedding models, vector stores, chunking strategies, and when to use each. See the framework.

AI Governance in Regulated Industries

How production AI governance works in HIPAA and SOC 2 environments — model controls, data classification, audit trails, and incident response.